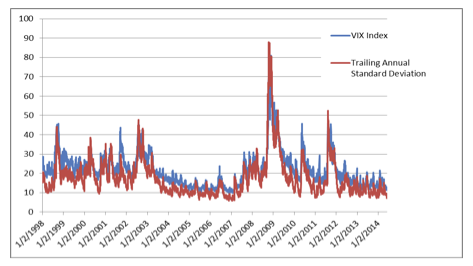

As the VIX tumbles to lows not seen since before 2008, we must ponder the meaning of this complete disappearance of volatility. Are we really witnessing historically low levels of risk?

The discussion of questions that this little paragraph appears to have so impressively and easily settled could have filled volumes, and the empirical results appeared solid enough for widespread acceptance. Why were the results so good, given what we now know about the performance of CAPM-based risk models? We believe that the key reason for the acceptance of Rosenberg’s paradigm and its MPT foundations was the particular sample that he used for testing, from 1954 to 1970. This happened to be one of the most tranquil periods in the history of stocks: the standard deviation of daily S&P 500 returns over this period was a meager 0.66 percent or so (annualized volatility of 10.46 percent), while the same daily standard deviation since that time (1970-2010) was much higher at 1.08 percent (annualized volatility of 17.1 percent). From 1928 to 1954, the daily standard deviation of the S&P 500 was higher still at 1.47 percent (annualized volatility of 23.2 percent).
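The annualized figures quoted above follow from the usual square-root-of-time scaling of a daily standard deviation. The sketch below is only an illustration of that arithmetic: the 252-trading-day year and the function name are assumptions, since the article does not state which day count was used, so the results match the quoted numbers only approximately.

```python
import math

# Assumption: 252 trading days per year. The article does not state its day count.
TRADING_DAYS_PER_YEAR = 252


def annualize_daily_vol(daily_std_pct, periods=TRADING_DAYS_PER_YEAR):
    """Scale a daily standard deviation (in percent) to an annual figure
    using the square-root-of-time rule."""
    return daily_std_pct * math.sqrt(periods)


# Daily standard deviations quoted in the text, by sample period.
for label, daily in [("1954-1970", 0.66), ("1970-2010", 1.08), ("1928-1954", 1.47)]:
    print(f"{label}: {annualize_daily_vol(daily):.1f}% annualized")
```

Small differences from the quoted percentages are to be expected from rounding of the daily figures or from a slightly different day count.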

Another and perhaps more illuminating way of looking at this issue is to count the number of days when the S&P 500 fell by more than 3.5 percent. For the 16-year period between 1954 and 1970, there were only 3 such days! For the prior 16 years, between 1938 and 1954, the number of such days was 24, eight times higher. Meanwhile, the 16 years between 1994 and 2010 have produced 35 such days. Rosenberg was not the only one who developed his ideas during a period of stable economic growth and an absence of major systemic risks.
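For readers who want to repeat this count on their own data, a minimal sketch follows. It assumes a plain list of daily closing prices and simple close-to-close returns; the function name and the sample prices are made up for illustration and are not S&P 500 data.

```python
def count_large_down_days(closes, threshold_pct=-3.5):
    """Count days whose close-to-close return is below threshold_pct (in percent)."""
    count = 0
    for prev, curr in zip(closes, closes[1:]):
        daily_return_pct = (curr / prev - 1.0) * 100.0
        if daily_return_pct < threshold_pct:
            count += 1
    return count


# Hypothetical closing prices, for illustration only.
sample_closes = [100.0, 101.0, 96.0, 96.5, 92.0, 93.0]
print(count_large_down_days(sample_closes))
```

Running the same function over each 16-year window of an index's history would give counts comparable to those quoted above, subject to the data source and return definition used.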

As the late investor and author Peter Bernstein (2007), himself an ardent proponent of efficient markets, notes, all of the key pillars of Modern Portfolio Theory were put in place between 1951 and 1973. Surely, in such a calm environment as the 1950s and 1960s, the financial markets may appear truly efficient, and recent historical returns may seem to be all you need to forecast risk. Thus, much of the groundwork of MPT risk modeling was laid during a period of unusually low risk, and this fact has to be kept in mind by anyone wanting to understand the roots of the problem.

Efficient Markets

The markets are not efficient, but they are random to a large degree. That still leaves room for some exceptional traders to forecast and outperform, but makes it really difficult for most of us.

How do we measure risk and present it to clients, given what we said above about the accuracy of the Sharpe Ratio, VIX and Confidence Range? Focus on creating plausible scenarios. There are a variety of tools that can be employed for that purpose, from simple qualitative analysis to quantitative stress testing. We will discuss scenario analysis in the next installment.

Daniel Satchkov is president of RiXtrema, a portfolio crash-testing company that helps advisors to discuss risk with clients. He frequently writes for popular and academic publications.

Forward Looking Risk?

VIX is supposed to be a measure of forward-looking volatility. It is based on decisions made by option traders from big investment houses with a lot of money on the line, and that is quintessential "smart money," isn’t it? In fact, their opinion of the future looks a lot like the recent past. Consider the chart below, which shows VIX alongside an exponentially weighted annualized standard deviation (decay weight of 0.9). The two track each other closely. So, traders do not have any more collective knowledge of the future than pharmacists, truck drivers or astrologers (though traders and astrologers get paid for their predictions).
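The exponentially weighted standard deviation used for this comparison can be sketched as follows. The article gives only the decay weight of 0.9, so everything else here is an assumption: daily returns as input, a zero-mean recursion on squared returns, a 252-day annualization factor, seeding with the first squared return, and the function name itself.

```python
import math


def ewma_annualized_vol(daily_returns, decay=0.9, periods_per_year=252):
    """Exponentially weighted volatility, annualized, in the units of the input.

    Assumed recursion: variance_t = decay * variance_{t-1} + (1 - decay) * r_t**2
    """
    if not daily_returns:
        return []
    # Assumption: seed the recursion with the first squared return.
    variance = daily_returns[0] ** 2
    vols = []
    for r in daily_returns:
        variance = decay * variance + (1.0 - decay) * r ** 2
        vols.append(math.sqrt(variance * periods_per_year))
    return vols


# Hypothetical daily returns in percent: ten calm days, then one large move.
calm_then_shock = [0.5] * 10 + [-5.0]
print(ewma_annualized_vol(calm_then_shock))
```

Note that the estimate only rises on the day of the large move itself, not before it. A measure built this way is backward-looking by construction, which is the point of comparing it with VIX.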

So, VIX is really just a rear-view measure. What about Value-at-Risk, Sharpe Ratio, Standard Deviation, Confidence Intervals, etc.? Unfortunately, they are no different from VIX in that regard. All of those measures are simply based on past volatility over some historical period. How did this come about?

The Old Paradigm

The answer lies in neo-classical economics and Modern Portfolio Theory (MPT). Both are thoroughly embedded in the physics-inspired view of the financial economy as a stable, equilibrium-seeking system. In such a view, if some changes do occur in the financial markets, those changes present no discontinuities and the model has ample time to react by slowly adjusting risk forecasts as the volatility rises. As almost everybody in the world knows by now, currently accepted risk models have time and again shown their inability to deal with financial market reality. Frequent talk of "hundred-year floods" and of a "rise in correlations" suggests not only frequent failures of a theory, but also the inability of the theory to learn from past mistakes by incorporating new data. The crash of 2008, completely unforeseen by all traditional risk systems, should serve as the final wake-up call to re-examine the foundations of the old paradigm and consider how sound they really are.

MPT started with the work of Harry Markowitz and is based on a number of fairly elaborate assumptions regarding financial markets. MPT’s dominance of portfolio management and reporting resembles the use of Newtonian mechanics in building automobiles. Not surprisingly, MPT comes under detailed scrutiny every time a supposed once-in-a-lifetime event occurs in the financial markets. To get at the roots of the problem, we must go back to Barr Rosenberg (1973), the father of all present-day risk modeling. In his significantly titled paper, The Prediction of Systematic and Specific Risk in Common Stocks, he started with these momentous words: “Ex Ante predictions of the riskiness of common stocks – or, in more general terms, predictions of probability of returns can be based on fundamental (accounting) data for the firm and also on the previous history of stock prices.”

As an aside, I am always amused by the ability of the proponents of the Efficient Markets Hypothesis to change the meaning of their theory in response to evidence that invalidates it. When a major bubble occurs and is followed by a crash, they manage to hold on and declare that their theory is quite intact. After “efficient” market prices swing as they did between 2008 and 2014, they simply redefine efficiency. For example, they will claim that the concept of efficient markets simply means that markets are hard to beat. Really?! That observation is all it takes to win a Nobel prize? To believe that the word efficient can mean the same as random is to perform feats managed hitherto only by Lewis Carroll’s Humpty Dumpty:

"There's glory for you!"

"I don't know what you mean by 'glory,'" Alice said.

Humpty Dumpty smiled contemptuously. "Of course you don't — till I tell you. I meant 'there's a nice knock-down argument for you!"'

"But 'glory' doesn't mean 'a nice knock-down argument'," Alice objected.

"When I use a word," Humpty Dumpty said, in rather a scornful tone, "it means just what I choose it to mean — neither more nor less."

Risk Management For Advisors

What are the practical implications for advisors? What is the best way to respond to client concerns about risk? Is inflation on the horizon, will the markets crash, or are we firmly in recovery territory? How should you discuss all these issues with your clients? Simple rules:

Black Swans Love A Low VIX

June 11, 2014
