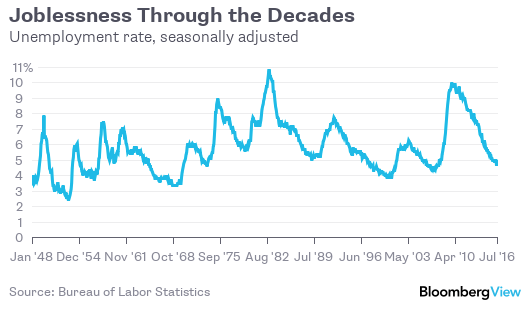

The U.S. unemployment rate was 4.9 percent in July, according to the Bureau of Labor Statistics. That’s the same as in July 1997. Does this mean the labor market is as healthy now as it was then, in the early days of the late-1990s boom? No, it doesn’t.

The broader U-6 unemployment measure, for example, which includes discouraged workers and involuntary part-timers, was still at 9.7 percent in July, compared with 8.6 percent in July 1997. Job growth reported by employers, at 1.7 percent over the past 12 months, was well short of the 2.6 percent pace of July 1997. And most discouragingly, the share of Americans 16 and older who were in the labor force (that is, either working or looking for a job) was just 62.8 percent, compared with 67.2 percent in 1997.

These are all good reasons to take the headline unemployment number with a grain of salt, and to question claims that happy days are here again. The unemployment rate is a problematic measure, especially when used for historical comparison.

Does this mean that the unemployment rate is some sort of “big lie” or “hoax,” charges that seem to be coming up ever more frequently? Well, if the unemployment rate is a hoax, it’s quite the long-running one. Yes, there have been modest shifts through the decades in how unemployment is defined, most recently in 1994. But if the rate seems less useful now than it once was, that probably has far more to do with changes in the economy and society than with anything the economic statistics-gatherers have done.

One of the central questions in measuring unemployment has been how to divide those who would work if work were available from those who shouldn’t be considered part of the labor force. One can just ignore the question, and look instead to the employment-to-population ratio -- a statistic that seems to be getting more attention lately (in my columns, at least). But policy-makers and number-crunchers have for various reasons always wanted a metric that excluded those who couldn’t or wouldn’t work.
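To make the arithmetic concrete, here is a rough sketch of how the three ratios relate. The function name and the head counts are hypothetical; the counts are not BLS data, just round numbers picked so that the unemployment and participation rates match the percentages quoted above. The point is that the same unemployment rate can sit on top of very different employment-to-population ratios, depending on how many people are counted as outside the labor force.

```python
def labor_market_rates(employed, unemployed, not_in_labor_force):
    """Return the three headline ratios, in percent, from raw head counts.

    Hypothetical helper for illustration only; it uses the standard
    definitions, not any official BLS code.
    """
    labor_force = employed + unemployed            # working or looking for work
    population = labor_force + not_in_labor_force  # everyone 16 and older
    return {
        "unemployment_rate": 100 * unemployed / labor_force,
        "participation_rate": 100 * labor_force / population,
        "employment_population_ratio": 100 * employed / population,
    }


# Made-up head counts (think of them as thousands of people), chosen so
# that both cases show 4.9 percent unemployment but differ in participation.
then = labor_market_rates(employed=951, unemployed=49, not_in_labor_force=488)
now = labor_market_rates(employed=951, unemployed=49, not_in_labor_force=592)

for label, rates in (("then", then), ("now", now)):
    print(label, {name: round(value, 1) for name, value in rates.items()})
```

In this toy example the unemployment rate is identical in both cases, yet the employment-to-population ratio is several points lower in the second, because the denominator includes the people the unemployment rate leaves out.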